You can use the `RecursiveCharacterTextSplitter` class from the LangChain library, which provides a simple way to split large text into smaller chunks.

The search step uses cosine similarity search to find the top five products that are most closely related to the input query. The query then keeps only the matched products that fall within the requested price range: `WHERE product_id IN (SELECT product_id FROM vector_matches) AND list_price >= $4 AND list_price <= $5`.
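Only the final `WHERE` clause of the search query survives in the text above, so the following is a hedged sketch of how the two stages might fit together rather than the notebook's actual query. The `product_embeddings` table, the `embedding` column, the selected columns, and the meaning of parameters `$1` to `$3` are assumptions. The `hybrid_search` function is a hypothetical in-memory equivalent that makes the logic easy to follow without a database.

```python
import math

# Assumed shape of the full query. Only the last two lines come from the text;
# the CTE, table name, column names, and parameters $1-$3 are illustrative.
HYBRID_SEARCH_SQL = """
WITH vector_matches AS (
    SELECT product_id, 1 - (embedding <=> $1) AS similarity
    FROM product_embeddings
    WHERE 1 - (embedding <=> $1) > $2
    ORDER BY similarity DESC
    LIMIT $3
)
SELECT product_id, list_price FROM products
WHERE product_id IN (SELECT product_id FROM vector_matches)
AND list_price >= $4 AND list_price <= $5
"""


def cosine_similarity(a, b):
    """Cosine similarity, i.e. 1 minus the cosine distance."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def hybrid_search(products, embeddings, query_vec,
                  min_similarity, top_k, min_price, max_price):
    """In-memory stand-in for the query: vector match first, then price filter."""
    scored = [(pid, cosine_similarity(vec, query_vec))
              for pid, vec in embeddings.items()]
    # Stage 1: keep the top_k products whose similarity exceeds the threshold.
    matches = sorted((s for s in scored if s[1] > min_similarity),
                     key=lambda s: s[1], reverse=True)[:top_k]
    match_ids = {pid for pid, _ in matches}
    # Stage 2: ordinary relational filter on the matched products.
    return [p for p in products
            if p["product_id"] in match_ids
            and min_price <= p["list_price"] <= max_price]


products = [
    {"product_id": "a", "list_price": 10.0},
    {"product_id": "b", "list_price": 50.0},
    {"product_id": "c", "list_price": 20.0},
]
embeddings = {"a": [1.0, 0.0], "b": [0.9, 0.1], "c": [0.0, 1.0]}
results = hybrid_search(products, embeddings, [1.0, 0.0], 0.5, 5, 5.0, 25.0)
print([p["product_id"] for p in results])
```

Product `b` is a close vector match but is dropped by the price filter, and product `c` is in the price range but is not similar enough, which is the point of combining the two conditions in one query.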

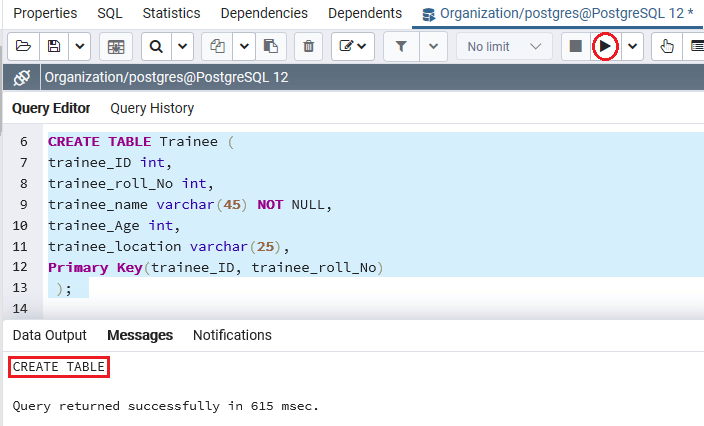

Copy the dataframe to the `products` table: build the records with `tuples = list(df.itertuples(index=False))`, then run `await conn.copy_records_to_table('products', records=tuples, columns=list(df), timeout=10)`.

Generating the vector embeddings using Vertex AI

We use the Vertex AI Text Embedding model to generate the vector embeddings for the text that describes the various toys in our products table. At publication, the Vertex AI Text Embedding model accepts only 3,072 input tokens in a single API request. Therefore, as a first step, split long product descriptions into smaller chunks of 500 characters each.

Split long text into smaller chunks with LangChain
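To make the 500-character chunking step concrete, here is a minimal pure-Python sketch. It is not LangChain's algorithm: `split_into_chunks` is a hypothetical helper that packs whole words into chunks of at most `chunk_size` characters. With LangChain installed, the equivalent would presumably be `RecursiveCharacterTextSplitter(chunk_size=500, chunk_overlap=0).split_text(text)`, where the zero overlap is an assumption.

```python
def split_into_chunks(text, chunk_size=500):
    """Greedily pack whitespace-separated words into chunks of <= chunk_size chars."""
    chunks = []
    current = ""
    for word in text.split():
        # A single word longer than chunk_size has to be cut mid-word.
        while len(word) > chunk_size:
            if current:
                chunks.append(current)
                current = ""
            chunks.append(word[:chunk_size])
            word = word[chunk_size:]
        candidate = word if not current else current + " " + word
        if len(candidate) <= chunk_size:
            current = candidate
        else:
            # The word does not fit, so close this chunk and start a new one.
            chunks.append(current)
            current = word
    if current:
        chunks.append(current)
    return chunks


description = "toy " * 300  # stand-in for a long product description
chunks = split_into_chunks(description, chunk_size=500)
print(len(chunks), [len(c) for c in chunks])
```

Each chunk can then be sent to the embedding model on its own, which keeps every request well under the input token limit.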

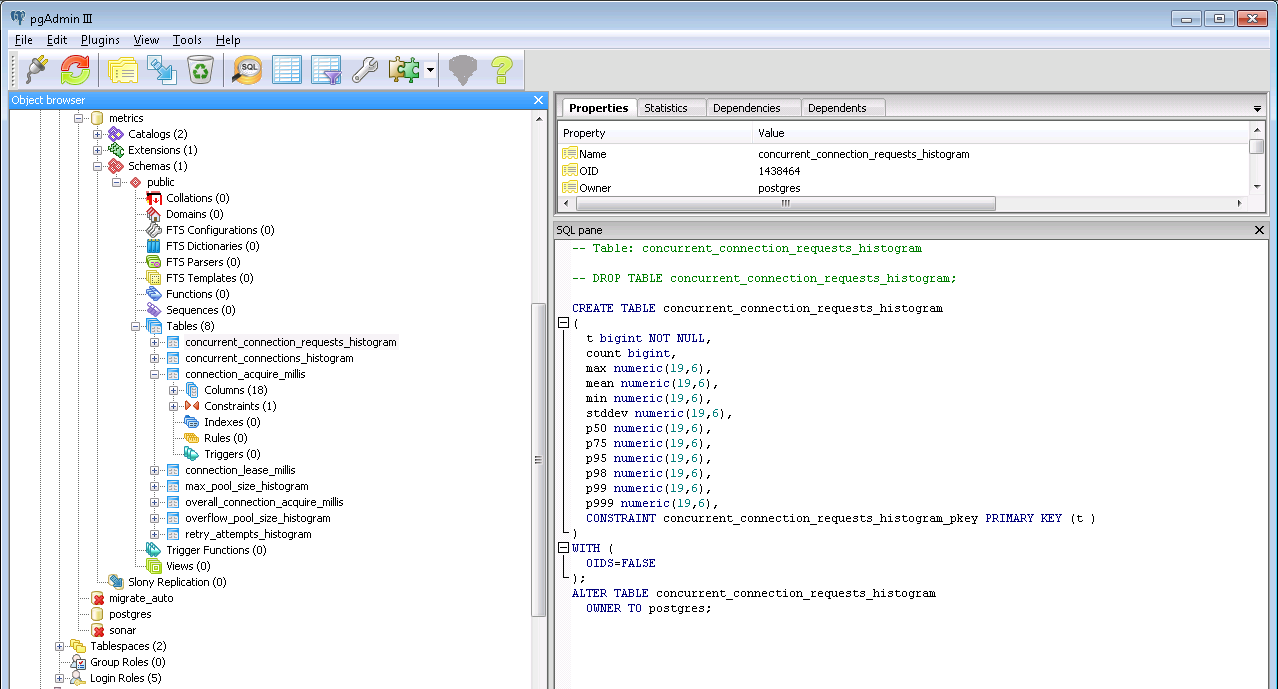

We use the cosine similarity search operator for our sample application.

Let's get started with building our application with pgvector and LLMs. We'll also use LangChain, an open-source framework that provides several pre-built components that make it easier to create complex applications using LLMs.

The entire application is available as an interactive Google Colab notebook for Cloud SQL PostgreSQL. You can run this sample application directly from your web browser without any additional installations or writing a single line of code!

Follow the instructions in the Colab notebook to set up your environment. Note that if an instance with the required name does not exist, the notebook creates a Cloud SQL PostgreSQL instance for you. Running the notebook may incur Google Cloud charges. You may be eligible for a free trial that gets you credits for these costs.

The sample application uses an example of an e-commerce company that runs an online marketplace for buying and selling children's toys. The dataset for this notebook has been sampled and created from a larger public retail dataset available at Kaggle. The dataset used in this notebook has only about 800 toy products, while the public dataset has over 370,000 products in different categories.

After you set up the environment using the steps mentioned in the Colab notebook, load the provided sample dataset into a Pandas data frame. The first five rows of the dataset are shown for your reference.

Next, save the Pandas dataframe in a PostgreSQL table. The notebook imports `Connector` from `google.cloud.sql.connector` and opens it with `async with Connector(loop=loop) as connector:`. Inside that block, it creates a connection to the Cloud SQL database with `conn: asyncpg.Connection = await connector.connect_async(...)`, passing the Cloud SQL instance connection name as the first argument, and then creates the table with `await conn.execute("""CREATE TABLE products(...""")`.
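The save step described above can be sketched as follows. This is an illustration under stated assumptions, not the notebook's code: the column list in `CREATE_PRODUCTS_SQL` is hypothetical because the original `CREATE TABLE` statement is truncated, and the `user`, `password`, and `db` arguments are placeholders you would supply. Plain dictionaries stand in for the Pandas dataframe so that the helper runs without extra packages. The database function imports the Cloud SQL Python Connector lazily and needs that package and `asyncpg` installed to run.

```python
import asyncio

# Hypothetical schema: the source's CREATE TABLE statement is cut off,
# so these columns are assumptions for illustration only.
CREATE_PRODUCTS_SQL = """
CREATE TABLE IF NOT EXISTS products(
    product_id VARCHAR(1024) PRIMARY KEY,
    product_name TEXT,
    description TEXT,
    list_price NUMERIC
)
"""


def rows_to_records(rows, columns):
    """Turn dict rows into tuples ordered by `columns`,
    similar to list(df.itertuples(index=False)) for a dataframe."""
    return [tuple(row[col] for col in columns) for row in rows]


async def save_products(instance_connection_name, user, password, db,
                        rows, columns):
    """Create the products table and bulk-copy the rows into it."""
    # Imported here so the helper above works without this package installed.
    from google.cloud.sql.connector import Connector

    loop = asyncio.get_running_loop()
    async with Connector(loop=loop) as connector:
        # Open an asyncpg connection through the Cloud SQL connector.
        conn = await connector.connect_async(
            instance_connection_name,  # "project:region:instance"
            "asyncpg",
            user=user,
            password=password,
            db=db,
        )
        try:
            await conn.execute(CREATE_PRODUCTS_SQL)
            await conn.copy_records_to_table(
                "products",
                records=rows_to_records(rows, columns),
                columns=columns,
                timeout=10,
            )
        finally:
            await conn.close()


sample_rows = [{"product_id": "p1", "product_name": "Toy cat",
                "description": "Orange and white cat stuffed animal",
                "list_price": 9.99}]
sample_columns = ["product_id", "product_name", "description", "list_price"]
print(rows_to_records(sample_rows, sample_columns))
```

Using `copy_records_to_table` loads all rows in one bulk operation instead of issuing one `INSERT` per product, which is why the records are prepared as a list of tuples first.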
You can also follow along with our guided tutorial video.

We'll build a sample Python application that can understand and respond to human language queries about the data stored in your PostgreSQL database. Then, we'll push the creative limits of the application further by teaching it to create new AI-generated product descriptions based on our existing dataset about children's toys. Forget about the boring "orange and white cat stuffed animal" descriptions of days past. With AI, you can now generate descriptions like "ferocious and cuddly companion to keep your little one company, from crib to pre-school". Let's see what our AI-generated taglines can do!

How to install pgvector in Cloud SQL and AlloyDB for PostgreSQL

The pgvector extension can be installed within an existing instance of Cloud SQL for PostgreSQL and AlloyDB for PostgreSQL using the `CREATE EXTENSION` command, for example `CREATE EXTENSION IF NOT EXISTS vector;`. If you do not have an existing instance, create one for Cloud SQL and AlloyDB.

New similarity search operators in pgvector

The pgvector extension also introduces new operators for performing similarity matches on vectors, allowing you to find vectors that are semantically similar.

`<->`: returns the Euclidean distance between the two vectors. Euclidean distance is a good choice for applications where the magnitude of the vectors is important, for example, in mapping and navigation applications, or when implementing the K-means clustering algorithm in machine learning.

`<=>`: returns the cosine distance between the two vectors. Cosine similarity is a good choice for applications where the direction of the vectors is important, for example, when trying to find the most similar document to a given document for implementing recommendation systems or natural language processing tasks.
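The difference between the two distances is easiest to see on a small example. The sketch below computes both in plain Python. The function names are illustrative, and the code mirrors the mathematical definitions rather than pgvector's implementation.

```python
import math


def euclidean_distance(a, b):
    """Straight-line distance between two vectors; sensitive to magnitude."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def cosine_distance(a, b):
    """1 minus the cosine of the angle between two vectors; ignores magnitude."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


original = [1.0, 2.0]
doubled = [2.0, 4.0]  # same direction, twice the length

print(euclidean_distance(original, doubled))  # about 2.236 (square root of 5)
print(cosine_distance(original, doubled))     # about 0.0, up to rounding
```

The two vectors point in the same direction, so their cosine distance is effectively zero even though they are far apart in Euclidean terms. That behavior is why cosine distance suits text embeddings, where direction carries the meaning.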

Cloud SQL for PostgreSQL and AlloyDB for PostgreSQL now support the pgvector extension, bringing the power of vector search operations to PostgreSQL databases. In this step-by-step tutorial, we will show you how to add generative AI features to your own applications with just a few lines of code using pgvector, LangChain and LLMs on Google Cloud.
